Machine Learning is an awe-inspiring technology that has everyone talking! As a result, researchers all over the world are working to understand this domain better.

ML is a subset of AI (Artificial Intelligence). Where AI refers to any kind of intelligent machine, ML refers to a particular type of AI that learns from data instead of following hand-written rules.

ML is precisely what its name says: machines that learn, i.e., the science of getting computers to act without explicitly programming them. Instead, it focuses on using algorithms and data to enable machines to learn from experience, in a way loosely similar to how humans do. One paradigm of this technology is RL – Reinforcement Learning.

There are two other paradigms, but we will stick with RL for today’s piece and learn more about this wonderful solution. So, let’s get to the fundamentals already!

What is Reinforcement Learning in Machine Learning?

First things first – what is RL?

Since this is a technical topic, we can't move ahead without understanding what RL is, so let us start with the basic definition.

Reinforcement Learning can be compared to the behaviourist approach to psychology (operant conditioning), which is about rewarding and punishing the subject to get the desired result. RL is precisely that, but with machines.

It is defined as a machine learning method that enables an agent to learn through trial and error in an interactive environment. It involves rewarding or penalising the agent for the actions it performs. For instance, if the machine does what the programmer wants, it is rewarded, and if it doesn't, it is penalised. The ultimate goal is to maximise the total reward collected over time.

This method, which is often combined with neural networks, helps an agent learn ways to attain complex objectives over many steps.
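To make the reward-and-penalty loop concrete, here is a minimal sketch of an agent interacting with an environment. The `Corridor` class, its reward values, and the `always_right` policy are all hypothetical, invented purely for illustration; real RL libraries expose a similar reset/step interface, but this is not any particular library's API.

```python
class Corridor:
    """Hypothetical toy environment: walk from cell 0 to the goal at cell 4."""

    GOAL = 4

    def reset(self):
        self.position = 0
        return self.position  # the initial state

    def step(self, action):
        # action is -1 (step left) or +1 (step right); the walls clamp the move
        self.position = min(max(self.position + action, 0), self.GOAL)
        done = self.position == self.GOAL
        # reward at the goal, small penalty for every other step
        reward = 1.0 if done else -0.1
        return self.position, reward, done


def run_episode(env, policy, max_steps=50):
    """Let the policy act until the goal is reached or max_steps is hit."""
    state = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(state)
        state, reward, done = env.step(action)
        total_reward += reward
        if done:
            break
    return total_reward


def always_right(state):
    return +1


def always_left(state):
    return -1


good_total = run_episode(Corridor(), always_right)  # reaches the goal in 4 steps
bad_total = run_episode(Corridor(), always_left)    # never reaches the goal
```

The policy that heads for the goal ends with a positive total, while the one that walks into the wall only accumulates penalties. A learning agent would use exactly this difference to improve its behaviour.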

Key Terms You Must Know in Reinforcement Learning

There are a few terms that one must be aware of when dealing with RL. They can be considered the jargon of this domain. Let us get to know them.

- Environment (e) – the scenario that the agent faces and interacts with.

- Model of the environment – it mimics the behaviour of the environment, predicting the next state and reward for a given state and action.

- Model-based methods – methods that solve reinforcement learning problems by using a model of the environment to plan ahead.

- State (s) – the present situation returned by the environment.

- Agent – the entity that performs actions in an environment to get rewards.

- Reward (R) – the immediate feedback given to the agent after it performs a specific action.

- Policy (π) – the strategy the agent uses to decide its next action, based on the current state.

- Value (V) – the expected long-term return with discounting, as opposed to the immediate reward.

- Value Function (V) – specifies the value of a state, that is, the total amount of reward the agent can expect to accumulate starting from that state.

- Q value or action value (Q) – it is similar to value, but it takes the current action as an additional parameter.
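The terms above fit together in a simple way, which the following sketch tries to show. The Q-table, its state and action names, and its numbers are made up for illustration. The sketch assumes the agent acts greedily, in which case the value of a state is the largest Q value available in it.

```python
# Hypothetical Q-table: Q[state][action] = estimated long-term return
Q = {
    "low_battery": {"recharge": 2.0, "search": -1.0},
    "high_battery": {"recharge": 0.5, "search": 3.0},
}


def greedy_policy(q_table):
    """Policy: in each state, pick the action with the highest Q value."""
    return {state: max(actions, key=actions.get) for state, actions in q_table.items()}


def state_values(q_table):
    """Value of a state under the greedy policy: V(s) = max over a of Q(s, a)."""
    return {state: max(actions.values()) for state, actions in q_table.items()}


policy = greedy_policy(Q)   # {'low_battery': 'recharge', 'high_battery': 'search'}
values = state_values(Q)    # {'low_battery': 2.0, 'high_battery': 3.0}
```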

What Are the Popular Reinforcement Learning Algorithms?

Q-learning

It is a value-based, model-free, off-policy learning algorithm. Here, the agent is not handed a policy to follow. Instead, it explores its environment in a self-directed way and learns the value of each action in each state, from which the best policy can be derived.
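As a rough illustration, here is a small tabular Q-learning sketch. The five-cell corridor, the reward values, and the hyperparameters (`alpha`, `gamma`, `epsilon`) are assumptions chosen for the example, not recommended settings.

```python
import random

N_STATES, GOAL = 5, 4
ACTIONS = (-1, +1)  # index 0 = step left, index 1 = step right


def step(state, action):
    """Hypothetical corridor: +1.0 for reaching the goal, -0.1 per other step."""
    next_state = min(max(state + action, 0), GOAL)
    done = next_state == GOAL
    return next_state, (1.0 if done else -0.1), done


def q_learning(episodes=300, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action_index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Self-directed exploration: sometimes act randomly, otherwise greedily
            if rng.random() < epsilon:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # Off-policy target: uses the best next action, whatever is taken next
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


q_table = q_learning()
# Greedy move in each non-goal cell, read off the learned table
greedy_moves = [ACTIONS[0 if row[0] > row[1] else 1] for row in q_table[:GOAL]]
```

In this toy setup the learned table should point right in every cell, since that is the shortest way to the reward.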

Deep Q-Networks

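This algorithm combines Q-learning with neural networks. Here too, the agent explores the RL environment in a self-directed way. The network is trained on random samples of stored past experience (a technique known as experience replay), and future actions are chosen from the values it predicts.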
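The "random sample of past experience" idea can be sketched as a replay buffer. This covers only that one ingredient, not a full Deep Q-Network. The class name, capacity, and the dummy transitions are assumptions made for illustration.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size memory of past transitions; the oldest entries are dropped first."""

    def __init__(self, capacity, seed=0):
        self.memory = deque(maxlen=capacity)
        self.rng = random.Random(seed)

    def push(self, state, action, reward, next_state, done):
        self.memory.append((state, action, reward, next_state, done))

    def sample(self, batch_size):
        # Uniform random sampling breaks the correlation between consecutive steps
        return self.rng.sample(list(self.memory), batch_size)

    def __len__(self):
        return len(self.memory)


buffer = ReplayBuffer(capacity=100)
for t in range(250):  # dummy transitions, just to fill the buffer
    buffer.push(t, t % 2, 1.0, t + 1, False)

batch = buffer.sample(32)  # 32 transitions drawn from the 100 most recent ones
```

In a full implementation, each sampled batch would be used for one training step of the Q-network.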

PPO (Proximal Policy Optimisation)

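The PPO algorithm was introduced in 2017 and quickly became one of the most widely used alternatives to deep Q-learning. It involves collecting a batch of environment interaction experiences and then using that batch to update the decision-making policy, while limiting how far each update can move the policy.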
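One way PPO keeps its policy updates small is a clipped objective. The sketch below shows that single piece for one sample and for a batch. The clip range of 0.2 is a commonly used value assumed here, and the function names are illustrative, not taken from any library.

```python
def clipped_surrogate(ratio, advantage, clip_eps=0.2):
    """Clipped objective for one sample.

    ratio     = new_policy_prob / old_policy_prob for the action taken
    advantage = how much better the action was than expected
    """
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    # Taking the minimum removes any incentive to push the ratio outside the clip range
    return min(ratio * advantage, clipped_ratio * advantage)


def batch_objective(ratios, advantages, clip_eps=0.2):
    """Average clipped objective over a collected batch of experience."""
    terms = [clipped_surrogate(r, a, clip_eps) for r, a in zip(ratios, advantages)]
    return sum(terms) / len(terms)


capped_gain = clipped_surrogate(1.5, 2.0)    # ratio capped at 1.2
capped_loss = clipped_surrogate(0.5, -1.0)   # ratio floored at 0.8
unclipped = clipped_surrogate(1.1, 1.0)      # inside the range, so unchanged
```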

SARSA

SARSA stands for State-Action-Reward-State-Action. It is an algorithm for learning a policy in a Markov decision process. It is an on-policy TD-learning (Temporal Difference) algorithm: the agent follows a policy and updates its estimates using the action that the same policy actually takes next.
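The difference between SARSA and Q-learning comes down to one line in the update rule, which this sketch places side by side. The state names, action names, and starting Q values are made up for the example.

```python
from collections import defaultdict


def sarsa_update(q, s, a, r, s_next, a_next, alpha=0.1, gamma=0.9):
    """On-policy: the target uses the action the agent will actually take next."""
    td_target = r + gamma * q[(s_next, a_next)]
    q[(s, a)] += alpha * (td_target - q[(s, a)])


def q_learning_update(q, s, a, r, s_next, actions, alpha=0.1, gamma=0.9):
    """Off-policy: the target uses the best next action, taken or not."""
    td_target = r + gamma * max(q[(s_next, b)] for b in actions)
    q[(s, a)] += alpha * (td_target - q[(s, a)])


def fresh_table():
    q = defaultdict(float)
    q[("s1", "right")] = 2.0
    q[("s1", "left")] = 5.0
    return q


q_sarsa, q_ql = fresh_table(), fresh_table()
# Same transition for both; the agent's policy picks "right" in s1
sarsa_update(q_sarsa, "s0", "right", 1.0, "s1", "right")
q_learning_update(q_ql, "s0", "right", 1.0, "s1", ("left", "right"))
# q_sarsa[("s0", "right")] is about 0.28; q_ql[("s0", "right")] is about 0.55
```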

DDPG

DDPG stands for Deep Deterministic Policy Gradient and is a model-free, off-policy algorithm. It combines ideas from DQN and DPG (Deterministic Policy Gradient) and is designed for learning continuous actions.
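Two small ingredients commonly found in DDPG implementations are sketched below: adding noise to a continuous action for exploration, and slowly blending the online network's parameters into a target network. Gaussian noise, the `tau` value, and the plain lists standing in for network parameters are simplifying assumptions for illustration; this is not a complete DDPG implementation.

```python
import random


def noisy_action(mu, noise_std, low, high, rng):
    """Deterministic action `mu` plus exploration noise, clipped to the valid range."""
    return min(high, max(low, mu + rng.gauss(0.0, noise_std)))


def soft_update(target_params, online_params, tau=0.005):
    """Move each target parameter a small fraction `tau` towards its online value."""
    return [(1.0 - tau) * t + tau * o for t, o in zip(target_params, online_params)]


rng = random.Random(0)
# e.g. a steering angle in [-1, 1] proposed by a hypothetical actor network
actions = [noisy_action(0.9, 0.3, -1.0, 1.0, rng) for _ in range(100)]

target = soft_update([0.0, 2.0], [1.0, 2.0], tau=0.1)  # roughly [0.1, 2.0]
```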

Real-World Applications of Reinforcement Learning (2026 Update)

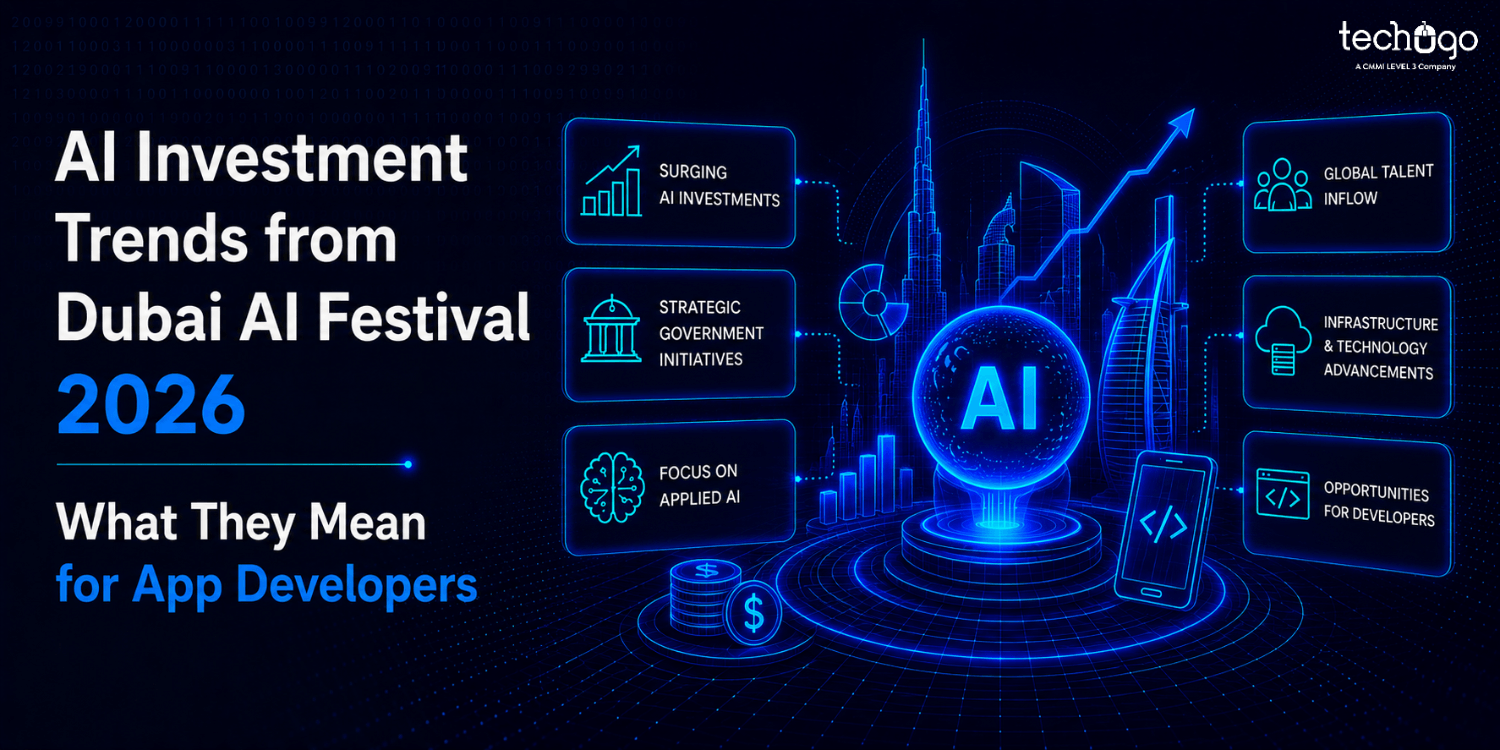

According to a report by Statista, the global AI market is expected to cross $500 billion by 2028, with reinforcement learning playing a key role in automation, robotics, and decision intelligence systems.

While reinforcement learning was once limited to research environments, it has now moved into real-world business applications across multiple industries. Its ability to learn from dynamic environments and make sequential decisions makes it highly valuable.

Let’s explore where RL is making a real impact:

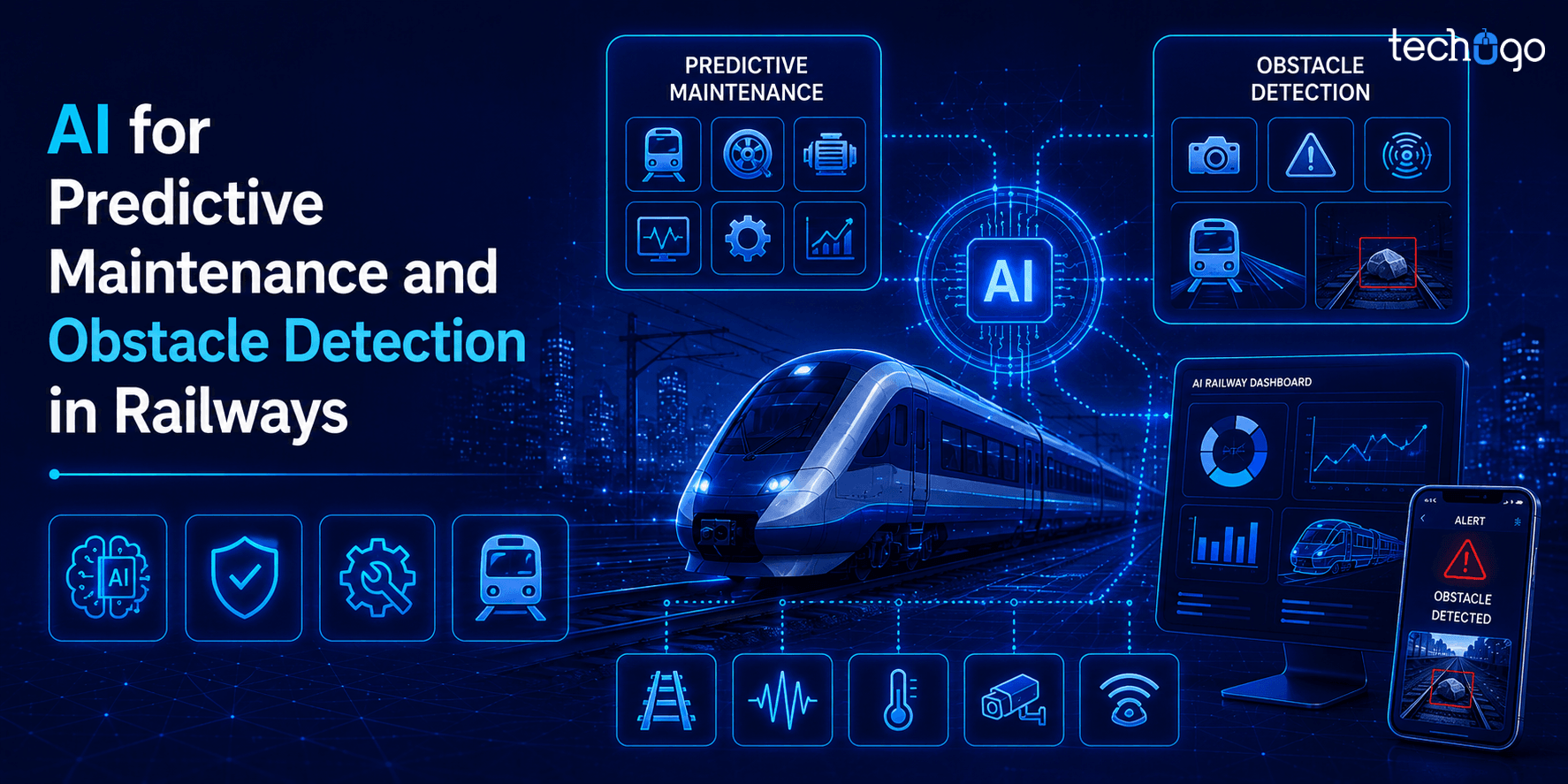

1. Autonomous Vehicles

Reinforcement learning plays a critical role in self-driving systems. It helps vehicles learn from real-time scenarios such as traffic conditions, pedestrian movement, and road obstacles. Over time, the system improves its decision-making, leading to safer and more efficient navigation.

2. Healthcare & Treatment Optimization

In healthcare, RL is being used to design personalized treatment plans. It can analyze patient responses over time and adjust treatments accordingly. It is also being explored in robotic surgeries and drug discovery, where precision and adaptability are crucial.

3. Finance & Algorithmic Trading

RL models are widely used in financial markets to optimize trading strategies. These systems learn from market patterns, risks, and rewards to make better investment decisions. Unlike traditional models, RL adapts continuously to changing market conditions.

4. Gaming & Advanced AI Systems

Reinforcement learning gained popularity through gaming environments. Today, AI models trained using RL can outperform human players in complex games. These systems continuously learn strategies, improve performance, and adapt to new challenges.

5. Supply Chain & Logistics Optimization

Businesses are using RL to optimize delivery routes, warehouse operations, and inventory management. It helps reduce costs, improve efficiency, and respond dynamically to demand fluctuations.

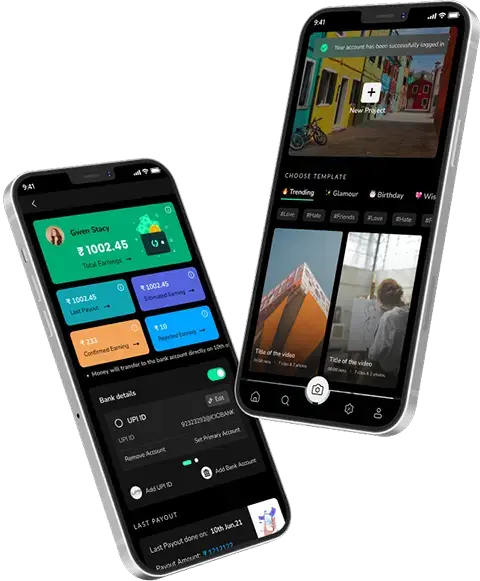

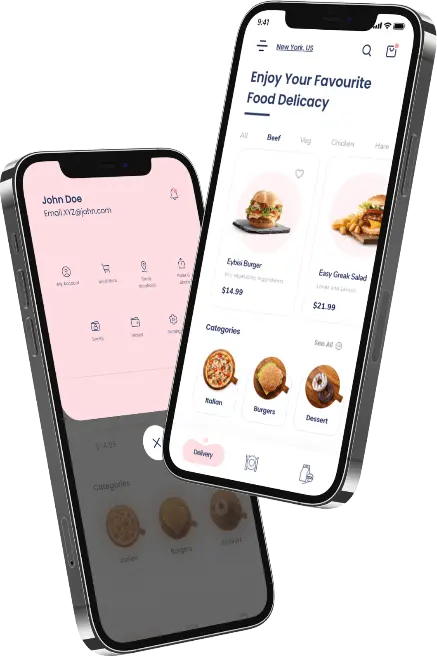

6. Recommendation Systems

Streaming platforms and eCommerce businesses use RL to personalize user experiences. By learning user behavior over time, RL models suggest more relevant content, products, and services, increasing engagement and conversions.

7. Robotics & Industrial Automation

RL enables robots to learn tasks through trial and error, especially in manufacturing environments. From assembly lines to quality inspection, it improves precision and reduces human intervention.

The Other Two Paradigms of Machine Learning

As mentioned earlier, RL isn't the only paradigm falling under the ambit of ML. There are two other types of learning. Let us get to know them before wrapping up! It goes without saying that both of the following are subsets of AI and ML.

– Supervised Learning

Here, machines are trained using well-labelled training data, which is the "supervision" the name refers to. On the basis of this training data, the machines then predict the output for new inputs.

SL involves providing input data as well as the correct output data to the ML model. The aim is to learn a mapping from the input variable to the output variable.
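As a small illustration of mapping inputs to outputs, the sketch below fits a straight line to labelled pairs using ordinary least squares. The study-hours data is invented, and a straight line is only one of many possible models.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept from labelled examples."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


# Labelled training data (made up): input = hours studied, output = exam score
hours = [1, 2, 3, 4, 5]
scores = [52, 54, 56, 58, 60]

slope, intercept = fit_line(hours, scores)


def predict(x):
    return slope * x + intercept


predicted = predict(6)  # prediction for an input the model has not seen
```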

– Unsupervised Learning

As the name suggests, it does not involve any supervision: the models are not trained on a labelled dataset. Instead, they find the hidden patterns in the given data themselves. UL algorithms discover naturally occurring patterns in a dataset on their own, which makes them especially useful when labels are unavailable.

This matters because, in the real world, input data with a corresponding output isn't always available. Unsupervised learning is required to solve such cases.
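To show what finding hidden patterns can look like, here is a minimal clustering sketch in the style of k-means with two groups. The data points are made up, and the function assumes the data contains at least two distinct values.

```python
def two_means(points, iterations=10):
    """Split 1-D points into two groups around two centres (k-means with k=2)."""
    c_low, c_high = min(points), max(points)  # simple starting centres
    for _ in range(iterations):
        # Assign every point to its nearest centre; no labels are involved
        low = [p for p in points if abs(p - c_low) <= abs(p - c_high)]
        high = [p for p in points if abs(p - c_low) > abs(p - c_high)]
        # Move each centre to the mean of the points assigned to it
        c_low, c_high = sum(low) / len(low), sum(high) / len(high)
    return c_low, c_high, low, high


# Unlabelled measurements (made up) that happen to form two groups
data = [1.0, 1.2, 0.8, 9.0, 9.5, 8.5]
centre_a, centre_b, group_a, group_b = two_means(data)
# centre_a is about 1.0 and centre_b is about 9.0
```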

Challenges in Implementing Reinforcement Learning

Even though reinforcement learning sounds powerful and promising, the reality is a bit more layered, and sometimes, honestly, a little complicated. It is not just about training a model and expecting it to perform well, because the process involves multiple moving parts that need to work together.

Let’s break down some of the key challenges, and why they matter:

1. High Data Requirements

Reinforcement learning models depend heavily on data, and not just any data, but continuous interaction data. The system needs to learn from repeated actions and outcomes, so that it can improve over time.

But the issue is, collecting this much data is not always easy, and in many cases, it becomes expensive and slow. Therefore, businesses often struggle at this stage itself.

2. Training Time and Computational Cost

Training an RL model is not quick, and it was never meant to be. It takes time, and also requires strong computational power, because the model keeps learning through trial and error.

So, the more complex the environment is, the longer it takes. And yes, the costs increase too, sometimes unexpectedly.

3. Exploration vs Exploitation Dilemma

This is one of the core challenges in reinforcement learning. The model has to decide whether it should try something new (exploration), or stick to what already works (exploitation).

But balancing this is tricky, because if the model explores too much, it may never stabilize. And if it exploits too early, it might miss better opportunities. So, the quality of its decisions can be uneven at times.
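A common way to handle this trade-off is the epsilon-greedy rule: explore with a small probability, exploit otherwise. The sketch below compares it with pure exploitation on a hypothetical two-armed slot machine. The payout probabilities, step count, and epsilon value are assumptions for the example.

```python
import random


def run_bandit(epsilon, steps=5000, payout_probs=(0.3, 0.8), seed=0):
    """Play a two-armed bandit and return (average reward, pulls per arm)."""
    rng = random.Random(seed)
    estimates = [0.0] * len(payout_probs)  # running average reward per arm
    pulls = [0] * len(payout_probs)
    total = 0.0
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(len(payout_probs))   # exploration: try anything
        else:
            arm = estimates.index(max(estimates))    # exploitation: best so far
        reward = 1.0 if rng.random() < payout_probs[arm] else 0.0
        pulls[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / pulls[arm]
        total += reward
    return total / steps, pulls


avg_greedy, pulls_greedy = run_bandit(epsilon=0.0)  # never explores
avg_eps, pulls_eps = run_bandit(epsilon=0.1)        # explores 10% of the time
```

In this setup the purely greedy agent never tries the second arm, so it never finds out that it pays better. The agent that explores a little discovers the better arm and ends up with a higher average reward.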

4. Designing Real-World Environments

For RL to work properly, the environment needs to be well-defined, and realistic. But creating such environments is not always straightforward.

Sometimes the environment is too simple, and sometimes it does not reflect real-world complexity. Because of this, the model learns patterns that may not actually work outside that setup.

5. Delayed Rewards Problem

In many reinforcement learning scenarios, rewards are not immediate, and that creates confusion. The agent performs several actions, and only later receives feedback.

So, it becomes difficult to understand which action actually led to the result. As a result, the learning process slows down, or credit ends up assigned to the wrong actions.
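One standard way to spread a late reward back over earlier actions is to compute the discounted return from each step onwards. The sketch below does this for a single made-up episode; the discount factor of 0.9 is an assumption.

```python
def returns_to_go(rewards, gamma=0.9):
    """Discounted sum of future rewards from each time step onwards."""
    returns, running = [], 0.0
    for reward in reversed(rewards):
        running = reward + gamma * running
        returns.append(running)
    return returns[::-1]


# The agent acts four times and only the last action is rewarded
episode_rewards = [0.0, 0.0, 0.0, 1.0]
credit = returns_to_go(episode_rewards)
# credit is roughly [0.729, 0.81, 0.9, 1.0]
```

Earlier actions still receive some credit for the final reward, just less of it the further they are from the outcome.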

6. Scalability Issues

As systems grow, and as the data increases, scaling RL models becomes a challenge. What works in a controlled setup does not always work in larger, real-time environments.

So yes, performance can drop, and optimization becomes necessary again and again.

7. Safety and Risk Concerns

In areas like healthcare, finance, or autonomous driving, the margin for error is very small. A wrong decision is not just a mistake; it can lead to serious consequences.

Because of this, reinforcement learning systems need to be carefully designed, tested, and monitored. And even then, there are risks involved.

Because these challenges are real, and they have been observed across industries, many businesses prefer working with an experienced AI app development company. This helps in managing complexity, reducing risks, and building solutions that are actually scalable and usable in the long run.

Come and Build a Bright Future with Us!

Reinforcement learning, without a doubt, is a cutting-edge technology that has a lot to offer in the future. Since it falls under the ambit of Machine Learning, ML experts can help build RL solutions and work on the related algorithms.

So, if you are also a far-sighted person with business acumen, then you know how promising the future is for businesses that embrace technology! Therefore, you must not keep sitting on that idea of yours to build something extraordinary.

Make the best out of the wonderful opportunities knocking at your door – do not shoo them away because they are technical. We are here to offer help for that.

So, connect with us at Techugo to explore the AI/ML domain like never before.

