
What happens when artificial intelligence stops living inside screens and starts interacting with the real world?

That’s where physical AI comes in.

Artificial intelligence is no longer limited to apps, dashboards, or chatbots on a screen.

Instead of simply analyzing data or generating text, physical AI brings intelligence into the real world. It allows machines to see what’s around them, move through spaces, react to changes, and make decisions on the spot.

In short, physical AI allows smart software to interact with the real world.

So, what is it actually like to have AI in the physical world? Let’s explore the most impactful physical AI use cases and benefits.


Physical AI, often referred to as embodied AI, is a type of artificial intelligence that steps out of software and works through machines in the real world.

Think about it for a moment. Most AI we interact with today lives inside apps or digital platforms. It analyzes data, answers questions, or helps automate tasks. Useful, right? But it usually stays behind the screen.

Physical AI changes that.

Now AI can operate through machines that see, sense, and respond to their surroundings. Cameras, sensors, and robotics collect information from the environment. The AI system processes that information and decides what action to take next.

In other words, it’s not just thinking anymore. It’s thinking and doing.

So how does AI actually turn information into action?

It starts with sensors and cameras. These tools help machines understand what’s happening around them. They can detect movement, recognize objects, and measure distance or temperature.

Once that information is collected, the AI system analyzes it almost instantly. Then comes the important part. The machine decides what to do next.

For example, picture a self-driving car moving through city traffic. Its cameras and sensors help it recognize traffic lights, pedestrians, and nearby vehicles so it can navigate safely. Or imagine a drone inspecting a bridge. It scans the surface, notices tiny cracks, and reports them before they become serious problems.

This is where physical AI stands out. It doesn’t just study data; it uses that data to act in the real world.

And that ability to observe, decide, and act is what makes physical AI so powerful.

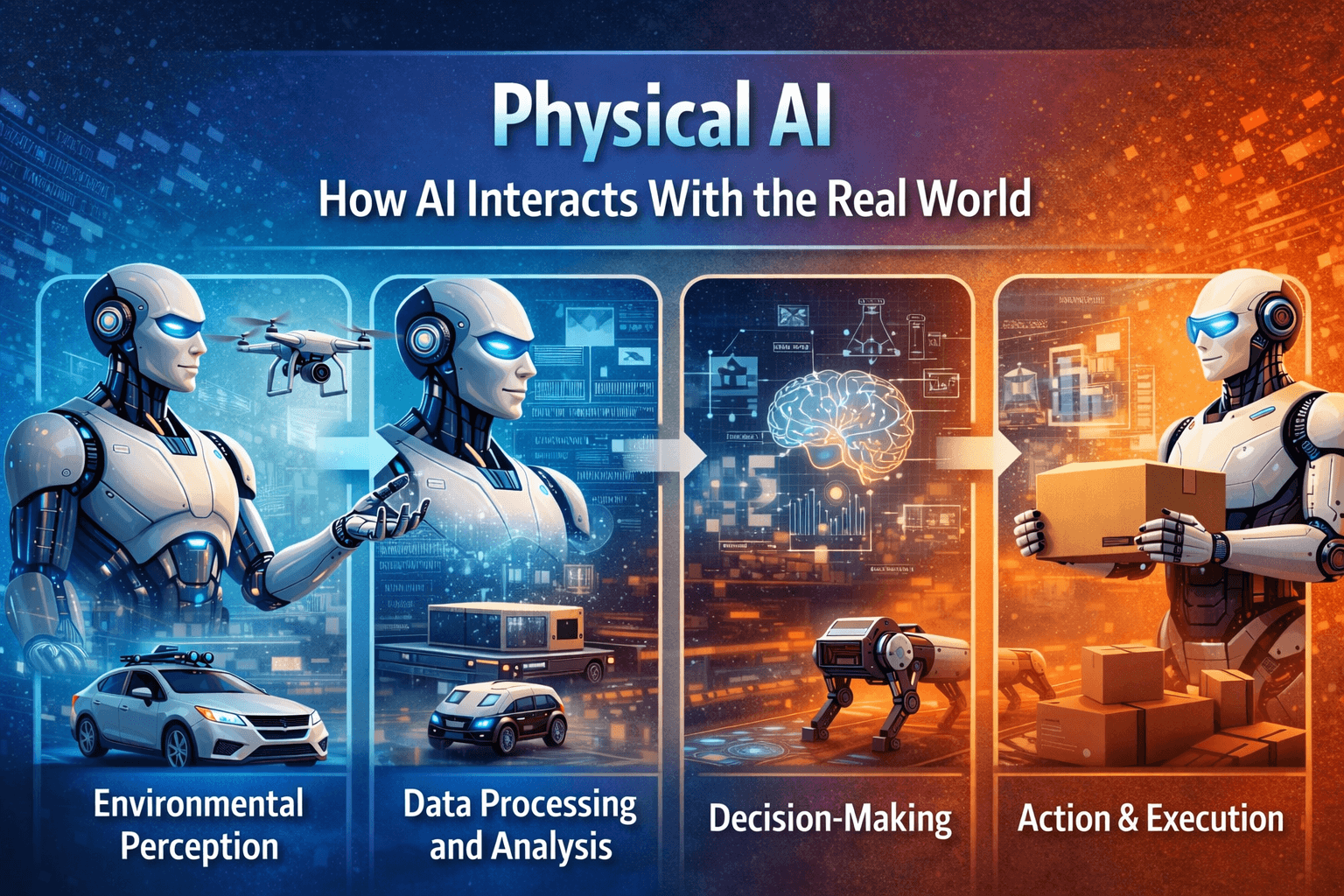

Physical AI might sound complicated at first. But when you break it down, the process is actually quite straightforward. Many systems rely on sensor-driven AI, where cameras and sensors help machines understand what is happening around them.

For machines to operate intelligently in real-world environments, they follow a simple cycle. They observe what is happening around them, interpret that information, decide what action makes sense, and then carry it out.

Each step builds on the previous one. Together, they allow machines to interact with their physical surroundings in a meaningful way.

Let’s walk through how this happens step by step.

Everything starts with awareness. Machines powered by physical AI need a way to understand what is happening around them. To do that, they rely on devices like cameras, sensors, and monitoring systems.

You can think of these tools as the machine’s eyes and ears. They capture information from the surrounding environment and send it to the AI system.

These technologies help machines detect things such as movement, nearby objects, distance, and temperature.

Take a warehouse robot moving through storage aisles as an example. Its cameras help it identify shelves and packages, while sensors alert it if something suddenly blocks its path.

Without this perception step, the machine would have no awareness of its surroundings.

Once information is collected, the next step is understanding it.

The AI system processes the data gathered from cameras and sensors almost instantly. It analyzes patterns, identifies objects, and builds a clearer picture of the environment.

In simple terms, the machine is trying to interpret what it is seeing.

For instance, an inspection drone scanning a bridge might capture hundreds of images. The AI analyzes those images to detect cracks, corrosion, or other structural issues that might require attention.

This stage turns raw sensor data into useful insights that the machine can actually work with.

After the environment is understood, the system must decide what to do next.

AI models evaluate the situation and determine the most appropriate response based on the available information. These decisions are usually made in real time.

Consider a self-driving vehicle approaching a pedestrian crossing. The system quickly analyzes the situation and decides whether to slow down, stop, or change direction.

This process happens incredibly fast, often within milliseconds.

In many ways, it resembles human decision-making. The machine evaluates the situation, considers possible outcomes, and chooses the safest or most efficient action.
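As a rough illustration of the decision step, here is a minimal sketch of how a vehicle might choose between continuing, slowing down, and stopping near a pedestrian crossing. Everything in it is an assumption made for illustration: the `Observation` type, the `decide` function, the reaction time, the braking rate, and the safety margin are hypothetical values, not figures from any real driving system. It relies only on the standard stopping-distance relation (reaction distance plus v²/(2a)).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    """What the (hypothetical) perception stage hands to the decision stage."""
    speed_mps: float                        # current speed in metres per second
    pedestrian_distance_m: Optional[float]  # None when no pedestrian is detected


def stopping_distance(speed_mps: float,
                      reaction_s: float = 0.2,
                      decel_mps2: float = 6.0) -> float:
    """Distance travelled while reacting, plus braking distance v^2 / (2a).

    The default reaction time and deceleration are illustrative assumptions.
    """
    return speed_mps * reaction_s + speed_mps ** 2 / (2 * decel_mps2)


def decide(obs: Observation, margin_m: float = 5.0) -> str:
    """Pick an action from the current observation."""
    if obs.pedestrian_distance_m is None:
        return "continue"
    needed = stopping_distance(obs.speed_mps) + margin_m
    if obs.pedestrian_distance_m <= needed:
        return "stop"
    if obs.pedestrian_distance_m <= 2 * needed:
        return "slow_down"
    return "continue"


if __name__ == "__main__":
    for distance in (12.0, 25.0, 100.0, None):
        print(distance, "->", decide(Observation(speed_mps=10.0,
                                                 pedestrian_distance_m=distance)))
```

A real system would weigh far more inputs, such as other vehicles, road conditions, and uncertainty in the sensor readings. The point of the sketch is only that the decision stage maps an interpreted situation to one concrete action.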

The final stage is where intelligence turns into real-world action.

Once the AI system decides what needs to be done, the machine carries out the task. This could involve movement, physical interaction, or automated operations depending on the machine’s role.

For example, a warehouse robot may pick up a package and move it to the correct shelf. A farming robot might detect which crops are ready and harvest them at the right time. Even delivery drones can adjust their flight paths mid-air to avoid obstacles and reach their destination safely.

These actions may look simple on the surface, but they are powered by a chain of intelligent decisions happening in real time.

This is what makes physical AI so powerful.

It does not just analyze information. It uses that information to interact with the world around it. When observation, analysis, decision-making, and execution all work together, machines gain the ability to operate independently and perform complex tasks with impressive accuracy.
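The four stages can be sketched as a simple loop. The example below is a toy simulation, not a real robot controller: the `world` dictionary, the function names, the one-metre safety gap, and the half-metre step size are all assumptions chosen to keep the illustration small. It shows a robot moving down an aisle until an obstacle comes too close.

```python
def sense(world: dict) -> dict:
    """Perception: read a raw value from a (simulated) distance sensor."""
    return {"distance_to_obstacle_m": world["obstacle_at_m"] - world["robot_at_m"]}


def interpret(reading: dict, safe_gap_m: float = 1.0) -> dict:
    """Understanding: turn the raw reading into a fact the robot can act on."""
    return {"path_blocked": reading["distance_to_obstacle_m"] <= safe_gap_m}


def decide(state: dict) -> str:
    """Decision: choose the next action from the interpreted state."""
    return "wait" if state["path_blocked"] else "move_forward"


def act(world: dict, action: str, step_m: float = 0.5) -> None:
    """Action: apply the chosen action to the simulated world."""
    if action == "move_forward":
        world["robot_at_m"] += step_m


def run_cycle(world: dict, steps: int) -> list:
    """Repeat observe -> interpret -> decide -> act and record each action."""
    log = []
    for _ in range(steps):
        action = decide(interpret(sense(world)))
        act(world, action)
        log.append(action)
    return log


if __name__ == "__main__":
    world = {"robot_at_m": 0.0, "obstacle_at_m": 2.0}
    print(run_cycle(world, steps=4))
    print(world["robot_at_m"])
```

Keeping each stage in its own function mirrors the way the cycle is described: each step consumes the output of the previous one, so a better sensor or a smarter decision rule can be swapped in without touching the rest.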

Not too long ago, most machines used in industries were designed for simple automation.

They followed fixed instructions and repeated the same task over and over again. Assembly lines, conveyor belts, and early industrial robots helped speed up production, but their abilities were limited. If something unexpected happened, these machines could not adapt or respond on their own.

Today, that picture looks very different.

With the advancement of artificial intelligence, machines are no longer restricted to rigid instructions. They can analyze information, respond to changes, and make decisions while performing tasks. This shift has transformed traditional automation into intelligent systems that can interact with real-world environments. In many industries, machines are slowly moving from being tools that follow commands to systems that can observe and react to situations.

A big reason behind this transformation is the development of powerful technologies such as advanced sensors, computer vision, and machine learning.

Together, advanced sensors, computer vision, and machine learning enable machines to observe, analyze, and respond to real-world situations more effectively.

Because of these advancements, industries across the world are increasingly adopting AI-powered machines.

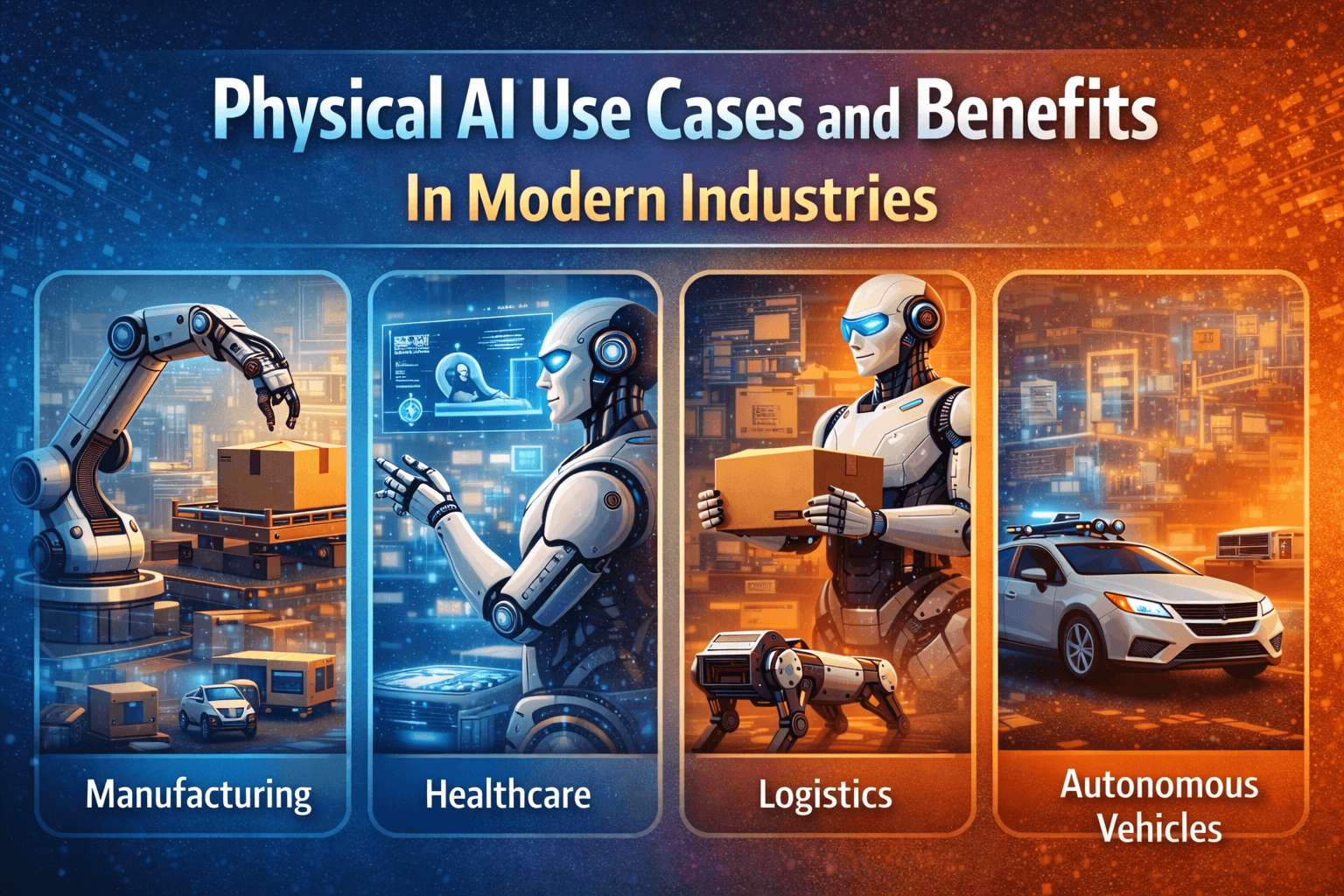

Manufacturing: Manufacturing companies are already using intelligent robots to make production smoother and reduce mistakes on the factory floor.

Agriculture: Farming is changing as well. In agriculture, AI-powered machines help farmers keep a closer eye on their crops, spot problems early, and even handle harvesting.

Logistics: Warehouses have become far more dynamic. In logistics, warehouse robots and delivery drones are making it easier to move goods, track inventory, and speed up deliveries.

What’s interesting is that these technologies are doing more than just improving productivity. They’re also helping businesses make quicker and smarter decisions based on real-time information.

As physical AI continues to develop, industries are gaining machines that can do far more than follow simple instructions. These systems can observe their surroundings, understand what’s happening, and respond when needed.

And that shift is slowly changing how modern industries operate, opening the door to a new generation of intelligent machines.

Physical artificial intelligence is bringing intelligence into machines that can operate in the real world. Instead of simply processing data, these systems can sense their surroundings and respond to what is happening around them.

Let’s take a closer look at some of the major benefits physical AI brings to modern industries.

One of the biggest advantages of physical AI is improved efficiency. AI-powered robots can complete repetitive tasks quickly. In factories and warehouses, they transport materials, sort items, and support production lines. Workflows become smoother, delays are reduced, and teams are free to focus on more complex work.

Physical AI is also helping to make workplaces safer. Machines can take over some of the riskier industrial jobs that involve heavy machinery or dangerous conditions. Robots can inspect equipment, handle hazardous substances, or operate in places that are unsafe for humans.

Another major advantage is the ability to work long hours without fatigue. AI-driven systems can keep operations running day and night, which helps companies stay productive and handle peak demand effectively.

AI-driven robots can perform their tasks with a high degree of accuracy. Computer vision and sensors help detect small defects during manufacturing, which improves product quality and reduces costly mistakes.

AI systems continuously collect operational data through sensors and monitoring tools. Businesses can analyze this data to understand trends, spot problems, and improve processes over time.
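To make the idea of spotting problems in sensor data a little more concrete, here is a minimal sketch that flags readings far from the average. The function name `flag_outliers`, the threshold of two standard deviations, and the sample temperature values are all assumptions made up for illustration. Production monitoring systems typically use more robust methods.

```python
from statistics import mean, stdev


def flag_outliers(readings: list, threshold: float = 2.0) -> list:
    """Return the indices of readings more than `threshold` sample
    standard deviations away from the mean of all readings."""
    if len(readings) < 2:
        return []  # not enough data to estimate spread
    mu = mean(readings)
    sigma = stdev(readings)
    if sigma == 0:
        return []  # all readings identical, nothing stands out
    return [i for i, r in enumerate(readings)
            if abs(r - mu) / sigma > threshold]


if __name__ == "__main__":
    # Hypothetical motor temperature readings; one value is clearly unusual.
    temperatures = [70.1, 69.8, 70.3, 70.0, 69.9, 70.2, 85.0, 70.1]
    print(flag_outliers(temperatures))
```

A flagged index does not say what went wrong. It only tells a technician where to look first.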

Once these systems have proven effective, companies can replicate them at other plants. This is where physical environment interaction becomes especially useful: machines can adapt to different locations while still doing the same work effectively.

With benefits like these, it is easy to see why a growing number of industries are building physical AI into their daily operations.

Physical AI is no longer limited to research labs. It is already being used in places where real work happens every day. Factories, hospitals, farms, and construction sites are starting to rely on machines that can observe their surroundings and respond to them.

This shift reflects the growing presence of AI in the physical world, often powered by sensor-driven AI that uses cameras and sensors to understand what is happening around it.

Here are some major ways physical AI is being used across industries:

Factories were some of the first places to adopt physical artificial intelligence. Walk through a modern production floor and you will notice robots working beside people. They assemble parts and handle repetitive tasks all day.

Each time a product moves down the line, the same question comes up: did it go through correctly? The system verifies it instantly and keeps the line moving.

Even in hospitals, embodied AI is helping doctors in ways that once felt like science fiction.

Surgical robots assist doctors during delicate procedures where steady hands really matter. They help make small, precise movements that can be hard to manage manually.

AI-powered tools also help doctors take a closer look at medical scans. Sometimes it’s the smallest detail that matters. Something easy to miss can now be spotted earlier.

Self-driving vehicles show what AI in the physical world really looks like. Cameras scan the road, sensors track movement, and the system constantly checks its surroundings.

It slows down, adjusts, and keeps learning from what it sees. This constant physical environment interaction helps the vehicle respond to traffic, obstacles, and sudden changes on the road.

Construction sites are busy places. A lot can change in a single day.

That is where intelligent machines and drones help. They scan large areas and capture images from above. They track progress across the site. If something looks off, teams can catch it early. A small fix today can prevent a bigger problem tomorrow.

These examples show how physical AI is already shaping real-world work across industries.

From factory floors to farms and even space missions, physical AI is showing up in more places than we once imagined.

And this is only the beginning of how machines will work alongside us in the real world.

As exciting as physical AI sounds, bringing it into real workplaces is not always simple.

Behind every smart machine is a lot of planning, investment, and adjustment. Companies often discover that adopting new technology comes with a few practical challenges along the way.

Here are some of the common challenges businesses face when implementing physical AI in real-world operations:

One of the first hurdles is cost. Building or buying machines powered by physical AI can require a large upfront investment.

Robots, sensors, software systems, and infrastructure all add up. For many organizations, the question naturally comes up: is it worth the cost right now? Over time, the efficiency gains can balance things out, but the initial step can still feel like a big leap.

These systems constantly collect and process data from their surroundings. Cameras, sensors, and connected devices are always gathering information to make decisions.

While this helps machines work better, it also raises concerns about how that data is stored and protected. Businesses need strong safeguards in place to make sure sensitive information stays secure.

Adding physical AI into an existing workflow is not always plug-and-play. Many companies already rely on older systems and equipment.

Connecting new intelligent machines with those systems can take time and careful planning. Sometimes it feels like trying to fit a very modern tool into an older setup.

Smart machines also need regular attention. Sensors need calibration, software needs updates, and hardware may need repairs from time to time.

Keeping everything running smoothly requires skilled technicians and ongoing maintenance.

Perhaps the biggest shift happens on the human side. When new technology enters the workplace, employees often need time to adapt.

Workers may need training to operate, monitor, or collaborate with AI-powered machines. The goal is not to replace people, but to help teams learn new skills and work alongside smarter tools.

Even with these challenges, many organizations continue moving forward with physical AI. With the right planning and support, the benefits often outweigh the difficulties.

What actually makes a digital product feel truly smart? The answer often lies in the technology, and more importantly, the team behind it.

That’s the kind of approach Techugo takes.

As a trusted AI development company, Techugo helps businesses turn AI ideas into real, working solutions. Instead of making AI sound overly technical, the team focuses on turning complex ideas into tools that actually help products work better.

Through generative AI integration services, Techugo helps businesses add features like automated content, smoother workflows, and more personalized user experiences. Nothing complicated for the user, just technology that makes every interaction feel a little smarter and a lot more effortless.

Physical AI is a type of artificial intelligence that works through machines in the real world. Instead of staying inside software systems, it powers robots, smart equipment, and autonomous machines that can sense their surroundings and respond to what is happening around them.

Most physical AI systems follow a simple cycle. They observe their surroundings using sensors and cameras. Then the AI processes that information, decides what action makes sense, and carries it out through a machine or robot.

Physical AI is already showing up in many industries. Factories use intelligent robots on production lines. Hospitals use surgical robots and AI-powered diagnostic tools. Self-driving vehicles rely on AI to navigate roads. Even farms now use drones and smart tractors to monitor crops and manage fields.

One big reason is efficiency. Machines powered by AI can work continuously, handle repetitive tasks, and perform certain operations with high precision. This helps businesses improve productivity, maintain quality, and support workers in complex tasks.
